In the race to build the next generation of AI products, we are once again becoming obsessed with speed. We talk about model performance, inference costs, edge deployment, and how quickly AI can move from prototype to production. But as AI expands into connected devices and physical environments, we are overlooking the one factor that will determine whether this market truly scales: trust.

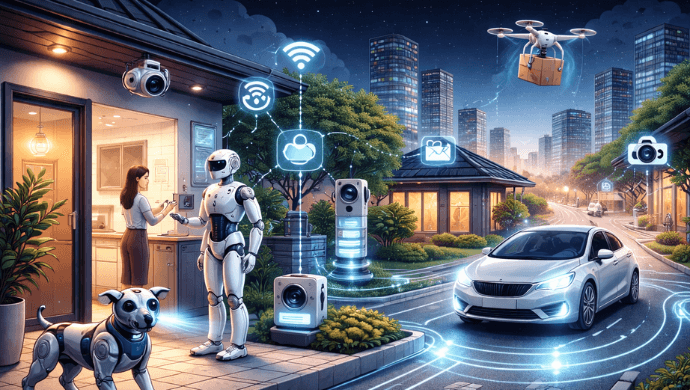

The next AI race will not be won only in software. It will be won in the physical world. That matters because the physical world has far less tolerance for failure.

A weak chatbot answer can be frustrating. A weak AI IoT experience can disrupt a live interaction, miss a critical signal, raise safety concerns, or cause users to lose confidence in the system altogether. In this new era, intelligence may create excitement, but trust is what makes adoption real.

This is the same broader pattern I wrote about in my earlier piece: when innovation outpaces governance, the result is not just risk but friction, and trust becomes the factor that determines whether a company scales or stalls.

## The new innovation paradox

AI is no longer confined to text boxes and copilots. It is moving into devices that listen, respond, sense, and act. That includes wearables, toys, cameras, robots, kiosks, industrial systems, and voice-first interfaces that operate in real time.

That shift creates a new paradox. AI has made it easier than ever to build quickly. But when AI enters connected devices and live environments, speed without trust becomes a liability. Enterprises hesitate. Security reviews get longer. Privacy questions get harder. Customers stop asking only whether the system is smart and start asking whether it is safe, reliable, and resilient under real conditions. The next AI race is in the physical world.

The companies that lead in AI IoT will not simply have the best models. They will have the most trusted systems.

## From model quality to system confidence

For the past few years, most AI discussions have centred on the model. Which model is better? Which is cheaper? Which is faster? But in AI IoT, the model is only one part of the product.

What matters just as much is whether the full system works in a noisy, unpredictable, and continuous world. Can it hear clearly in difficult environments? Can it respond fast enough to feel natural? Can it recover when connectivity degrades? Can it handle real-time interruptions? Can it protect data across devices, networks, and regions with different compliance requirements?

Those are not secondary questions. In AI IoT, they are the product. This is where trust stops being a vague brand attribute and becomes an engineering discipline.

Trust means the system behaves in a way people can rely on, even when conditions are imperfect.

## Why trust gets harder in the physical world

The challenge with AI IoT is that every layer matters at once. You are not just managing model behaviour. You are managing device identity, audio quality, latency, packet loss, edge conditions, privacy controls, infrastructure resilience, and the live user experience. A strong model cannot compensate for a system that feels delayed, fragile, or inconsistent in the moment. That is why AI IoT raises the standard so dramatically.

Users do not experience these systems as separate layers. They experience them as one thing. If one part breaks trust, the whole system loses credibility. In the physical world, trust is judged in real time.

## Where is the market going?

You can already see this shift in how leading real-time platforms are evolving.

Agora’s earlier trust-by-design framing emphasised privacy by default, sovereignty-as-a-service, and encrypted integrity as the foundation for scaling real-time systems with confidence. That same logic now extends into AI IoT. Agora’s Conversational AI Engine is built around a practical reality: for AI to work in real-world voice and device experiences, it has to perform under difficult conditions.

This includes support for connecting to different LLMs, reduced response delay, real-time interruption handling, background-noise suppression, selective attention to the primary speaker, and performance under challenging network conditions.

The relevance here is less about the product itself and more about what it reflects. The next phase of AI is not just about model-centric capability. It is about infrastructure-centric confidence. In physical-world applications, what matters is not only what the model can generate, but whether the full experience is natural, responsive, and dependable at scale.

## Security is no longer the handbrake

In the enterprise world, security used to be treated as the final checkpoint. Now it is often the first serious buying filter. That was true in the broader AI wave, and it becomes even more true in AI IoT, where failures are more